I asked Jensen Huang, the CEO of Nvidia, if Autonomous Vehicles need to have self-aware networks.

I wanted to know whether it will be necessary for Self Driving Cars to become Self-Aware Networks with a Self-Concept. I also wanted to know about combining the Drive Sim & Constellation System with Clara, the new Medical Imaging Super Computing Platform, to make it easier for researchers to establish next generation neural correlations, for example. Listen to his reply in the audio clip at the end (my Q&A with Jensen Huang is at the bottom).

Written by Micah Blumberg, Silicon Valley Global News SVGN.io and host of the Neural Lace Podcast VRMA.io

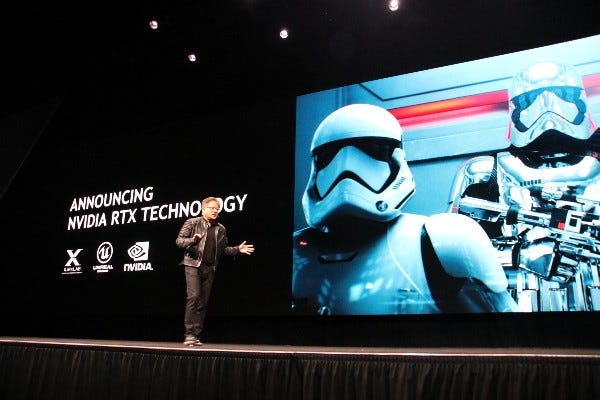

At GTC 2018 Nvidia introduced real time ray tracing to the world. It’s called RTX, and it relies on a technology called AI denoising: only a small number of rays are traced for the scene, and a neural network predicts the clean image from that noisy result. This AI denoising technology was previously demonstrated by OTOY, and you can see my interview with Jules Urbach, the CEO of OTOY, here.

My interview with Jules Urbach the CEO of OTOY at GTC 2018

We talked about some of the big ideas behind the big news about RTX Real Time Ray Tracing, we talked specifically about…medium.com

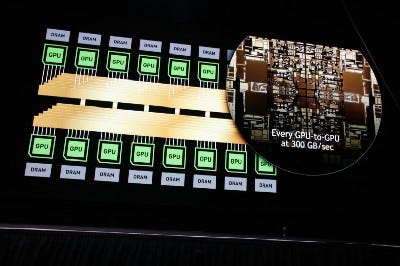

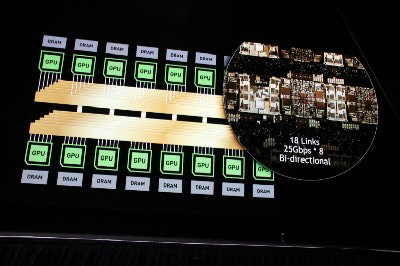

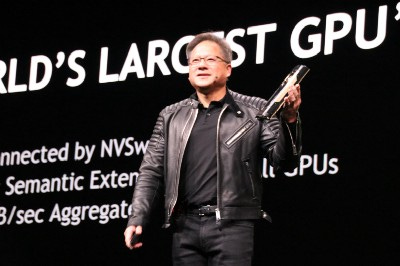

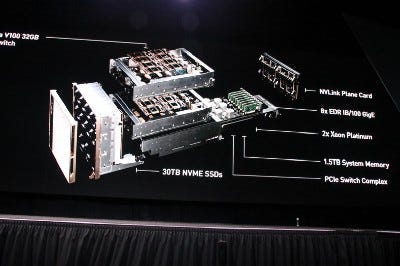

Nvidia also launched its brand new AI platform, the DGX-2, which delivers 2 petaflops of computing with 512GB of HBM2 memory. It supports up to 16 GPUs, weighs 350 lbs, and needs only 10kW. Nvidia also introduced its new Drive Sim & Constellation system and Clara, the new Medical Imaging Super Computing Platform. Here is a link to more information about Clara: https://blogs.nvidia.com/blog/2018/03/28/ai-healthcare-gtc/

Jensen Huang, the CEO of Nvidia, is known for saying funny lines during his keynotes that get his audience laughing. He says things like “The more you buy, the more you save,” which I think could be a nod to the fact that a CEO’s number one job is to sell their company’s products. It also drives home the point that Nvidia’s computer architectures are shrinking computer server farms and doing a lot more with a lot less energy, a lot less cost, and a lot less space. Every time I see Jensen Huang speak I expect amazing things, and he always delivers. One of these days I expect a humanoid robot will walk out on stage with him, like in the movie I, Robot. If there is a list of the people on this planet most likely to build a self-aware robot with a humanlike sentient brain in our lifetimes, Jensen Huang would be a good candidate.

In addition, technology that had previously only been featured in the movie Black Panther was realized in real life during this keynote:

“Are you guys putting this in your head right now? There is Tim in reality, but he is inside Virtual Reality controlling a car that’s in reality but it’s not here!” Jensen Huang says, and a little bit later he adds, “This is like three layers of Inception right now,” a reference to the nested dream layers in the movie Inception.

See my full article on that here with video I recorded from the first row of the audience:

Nvidia demo: Engineer drives a real car from inside VR

Nvidia combines its Virtual Reality Holodeck application with Self Driving Cars so a human can take over a vehicle…medium.com

After the keynote, in the Press Room, I asked Jensen Huang the following. An audio recording of my questions and his response is included.

I have three really easy questions:

I noticed that you have a feedback loop between the Drive Simulation and Pegasus, the Driving Computer, and I think the brain is a feedback loop. So could it be important to feed the self-driving car information about itself so it gets a self-concept? Does it currently have a self-concept? When does it become a self-aware network?

Can we combine the Clara Medical Imaging Super Computer with the data from the Drive Simulation so we can establish next generation neural correlations between the medical imagery and the world that the car sees?

Why aren’t cryptocurrency miners using DGX systems, and if we market the DGX to cryptocurrency miners, won’t they leave our VR graphics cards alone?

Here is a text summary paraphrasing Jensen Huang’s answers to my questions. You can also listen to my questions and hear his response in the audio below.

[Cryptocurrency mining doesn’t need as much system architecture as the DGX offers. An AI system, with its streaming storage and processing and its system storage, is very, very different from a cryptomining system.]

[The first question, do we feed it in as a feedback loop (between Pegasus and Drive Simulation): that’s a good observation. One box is basically the world that the eyes see; it is the world generator, the image generator. The second box is the brain, the part of our autonomous vehicle that does the reasoning and the planning. That’s the world and the brain, and Constellation has those two systems integrated into it. The reason we don’t turn it free to teach itself is that we don’t want this box to be a black box. We want to know the pieces of technology that are engineered into the self-driving car so that we understand the things that it does and why it’s doing them, and so we are building the technology in pieces. The technology has to be very easy to understand. We don’t want a whole lot of stuff inside the black box, because if we can’t understand it we can’t reason about how it’s going to be effective. The second reason is that by breaking these things into small boxes we can have redundancy and diversity: we can do the same thing in a redundant way but differently. The way to get resilience is to have redundancy and diversity, to do the same job in different ways by different people. By using redundancy and diversity we can make the car much more resilient, so that we don’t have a single point of systematic failure.]
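To make the redundancy and diversity idea a little more concrete, here is a minimal Python sketch of my own. It is not Nvidia’s code or architecture: the three planner functions, their thresholds, and the `hand_over_to_human` fallback are all hypothetical, chosen only to show several small, understandable boxes doing the same job in different ways and then voting.

```python
from collections import Counter
from typing import Callable, Sequence

# Hypothetical interface: a planner maps the distance to an obstacle
# (in metres) to an action string. Nothing here is a real Nvidia API.
Planner = Callable[[float], str]


def rule_based_planner(distance_m: float) -> str:
    """Brake whenever the obstacle is closer than a fixed distance."""
    return "brake" if distance_m < 30.0 else "cruise"


def time_to_collision_planner(distance_m: float, speed_mps: float = 15.0) -> str:
    """Same job, different method: brake when time to collision is short."""
    return "brake" if distance_m / speed_mps < 2.5 else "cruise"


def faulty_planner(distance_m: float) -> str:
    """Simulates a broken component that never brakes."""
    return "cruise"


def vote(planners: Sequence[Planner], distance_m: float,
         fallback: str = "hand_over_to_human") -> str:
    """Return the strict-majority action, or the fallback if there is none."""
    votes = Counter(planner(distance_m) for planner in planners)
    action, count = votes.most_common(1)[0]
    return action if count > len(planners) / 2 else fallback


if __name__ == "__main__":
    team = [rule_based_planner, time_to_collision_planner, faulty_planner]
    # Two healthy planners outvote the faulty one.
    print(vote(team, 20.0))
    # With no majority, the decision is escalated instead of guessed.
    print(vote([rule_based_planner, faulty_planner], 20.0))
```

Because each planner is small and readable, a disagreement can be traced back to a specific box, and one failing box cannot decide the outcome on its own.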

The YouTube link above is the audio capture of my exchange with Nvidia CEO Jensen Huang.

Conclusion: I came away thinking of Nvidia’s Self Driving Car not as a single robot, but as an entire team of awesome robots working together to overcome the failings of any single artificial mind. And if the AI fails, humans will be ready to step in via Virtual Reality.